Machine learning can seem like an intimidating subject to understand. Typically this would require being proficient at advanced math such as linear algebra, calculus, and statistics. However, even without knowing what goes on under the hood, it’s possible to make use of it to solve business problems. You don’t fully understand every aspect of your car, yet you drive it. The same goes for machine learning.

AutoML is a product made by Google, which allows pretty much anyone to use machine learning because it looks at your data and builds a model automatically. The techniques it uses are quite sophisticated, and I won’t be discussing that here. Instead, I’ll focus on being pragmatic and show you how to use it without the math background. We’ll go over an example AI project that reads product reviews and classifies them as either negative or positive. We can then use this to predict whether what someone is saying about our products in other places such as twitter, forum posts, etc are negative or positive. New reviews may then be processed and automatically categorized.

How is an AI Model Created?

You only need two things to train a model:

- Data - this is something you provide. Luckily, almost everyone can provide data of some kind.

- A sophisticated means of training a model using that data. Luckily AutoML has you covered for this part, so you don’t need to worry about this.

To train AI models, nearly anything may be used as input. Any point of data that truly represents some category is a valid input. As an example, say you want to automatically scan reviews of a product and understand whether people are generally more positive or negative in their reviews. To provide data for this example task, we need to give labeled examples of reviews. All that means is to put a label (indicating positive or negative) on a bunch of reviews. The computer takes this input and calculates an understanding of negative and positive when reading a review.

Training Data

Training data is what we call the data that our model uses to learn. It’s a bunch of examples of judgment on data. For our purpose of reading a review and judging whether it’s a positive or negative review, the example data could consist of a review comment and a label saying whether that review is positive or negative. That label is set by a human after reading the review to classify it. So basically, you’d just need a bunch of examples and a label on each of them saying either “positive” or “negative”.

In what format are these examples in you ask? It doesn’t matter as long as your data pipeline understands it. For AutoML, I like to use CSV files because they’re easy to understand. Think of a CSV file like a table of data. The first two lines are your column headings, and each line below represents data that should be in that cell. Commas separate the cells. In the table below, we have two columns, ReviewText, and Sentiment. The ReviewText represents the review comment left by the reviewer. The right column is Sentiment, where I’ve chosen 1 to represent a positive review and 0 to represent a negative review.

example-data.csv

ReviewText, Sentiment

"This product is garbage and I'd recommend nobody ever buy it!", 0

"It solved all my problems in life, I had to order another one as a gift for my wife so all her problems could be solved too! I'd highly recommend!", 1

"I wish I'd known about this sooner. It works as it should, and I'm happy with it.", 1

"Mine broke, and I found out there's no warranty. Seem to be cheaply made and poor build quality.", 0

As you can see in this example, the reviews are blatantly positive or negative. The unmistakable connotations are only for clarity’s sake. In reality, it’s a good idea to have lots of reviews that sit on a fine line between the decision boundary. This means it’s good to have reviews that are mixed positive and negative but label them carefully in the most substantial direction. The more examples you can provide, the better. These examples should be authentic examples, from actual reviewers. You don’t want to make this up if you can help it.

Ideally, you’ll have lots of examples. Lots. Like hundreds or even thousands for some advanced types of problems. If you’re trying to solve a particularly tough problem, it may even take more than that! The more data you can provide and the more accurate the labeling is, the higher the models’ accuracy for future predictions. If you find the results are particularly bad when you get to the testing phase, try collecting more data, and labeling it.

Upload CSV to Google AutoML and Train a Model

Now that we have our data prepared in a CSV file, with the first column being our text and the second column being a label (0 for negative and 1 for positive), we now need to upload our data to AutoML. First, we must create a new dataset.

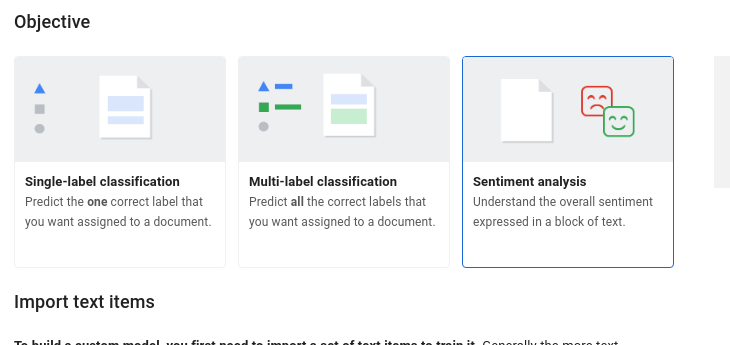

Click the NEW DATASET button, give your dataset a name and for the objective, be sure to select either Single-label classification or Sentiment analysis. Select your CSV file and upload it, then click CREATE DATASET.

Once our data is uploaded, AutoML analyzes our data and builds a model to predict the category for future data. This process is called training. Training typically takes several hours or longer, depending on how much data you have. You’ll receive an email once AutoML has completed training your data. Sometimes there are errors with training because there’s something wrong with your data, such as incorrect CSV formatting. You may have to fix it and re-upload the data again.

Testing your model

Before training, AutoML carves out of a small portion of your dataset and sets it aside. This small portion of data is called test data. The test data won’t be used for training and is only used at the end of training to test the model and calculate various metrics. The metrics include accuracy, precision, and recall. As mentioned, you can also type in some new test data to see how the model reacts under various circumstances.

Applying your model

At this point, the technology can prove itself. The next steps are where it may benefit your organization to hire a software developer. A competent software developer can integrate the model with any source of data. This integration is called a data pipeline which further improves the accuracy of your model over time. The software developer can also develop the software required to utilize your model to gracefully intake new input and return the result or use the result to perform some specific action.